As AI use becomes normal, the real question is no longer who wrote every sentence. It is who directed the process, verified the claims, corrected the errors, and stands behind the result.

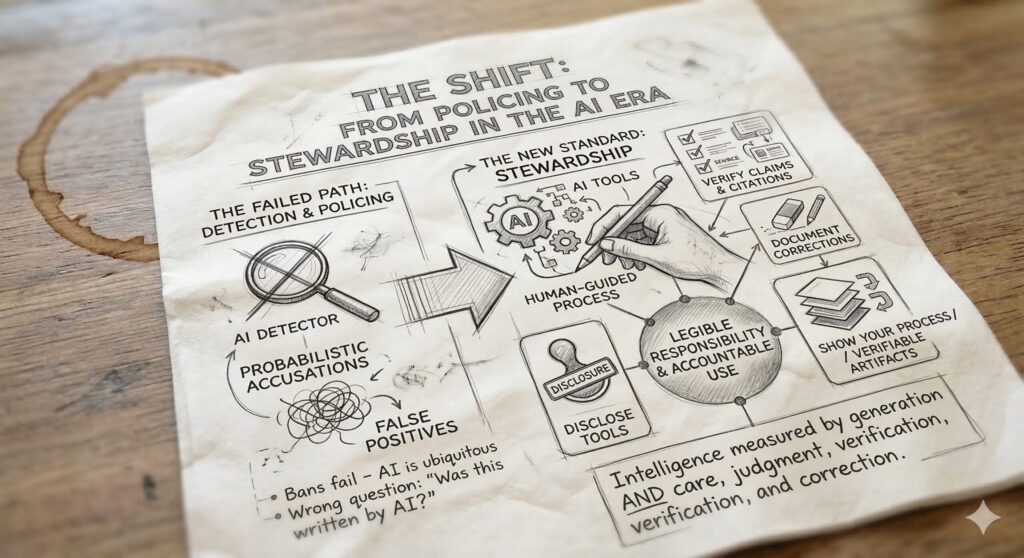

Universities are being pushed into a false choice: ban AI, or try to detect it.

Both paths fail.

Bans fail because students already live inside an environment saturated with AI. The tools are embedded in search, writing assistants, coding environments, note-taking systems, and everyday software. A ban may sound clear on paper, but in practice it becomes inconsistent, selective, and difficult to enforce.

Detection fails for an even deeper reason. It asks the wrong question. “Was this written by AI?” might once have looked like a meaningful line, but that line is collapsing. Real work is increasingly hybrid. A student may use AI to brainstorm, outline, paraphrase, translate, debug code, summarize readings, or pressure-test an argument. Another may write the first draft alone and use AI only for revision. A third may use AI heavily but then spend hours checking claims, replacing weak sources, and restructuring the logic.

The final text alone cannot tell that story.

That is why the educational debate needs a new center of gravity.

The missing standard is stewardship.

Stewardship shifts the focus away from the fantasy of untouched authorship and toward the reality of accountable process. It asks not whether AI touched the work, but whether the human being used it responsibly. Who guided the system? Who checked the claims? Who corrected the errors? Who disclosed the tools? Who accepted responsibility for the final submission?

That is the right question for the AI era.

A steward is not merely the originator of words. A steward is the one who remains responsible for truth, meaning, and consequence. Stewardship means that intelligence is measured not only by generation, but by care, judgment, verification, correction, and accountable use.

This is where much of the public conversation still falls short. We are trapped in a policing model built for a world that is disappearing. Detection systems try to infer authorship from the surface of a document. They produce probabilities, not understanding. They encourage suspicion, not learning. They generate false positives, adversarial dynamics, and endless procedural disputes.

Education should not be organized around probabilistic accusations.

It should be organized around legible responsibility.

That shift matters for another reason: the deeper problem is not only misuse, but drift. Systems trained recursively on synthetic outputs tend to recycle already-compressed patterns. They remain fluent, but often become thinner. They preserve form while losing depth. They are more likely to reproduce what is already statistically nearby than to reach toward what is newly true.

This is one reason human beings remain essential.

Not because humans are magically pure. Not because every human draft is better than every machine draft. But because humans can still inject novelty at a deeper level: a new hypothesis, a new distinction, a fresh counterexample, a bridge between two fields, a moral priority, an intuition grounded in lived reality, a question no dataset was already optimized to ask.

A purely synthetic loop tends toward closure. Human contact reopens the loop.

Reality comes in layers. Surface language is only one of them. Beneath the visible text are deeper structures: evidence, models, assumptions, values, experiments, corrections, interpretations, and contact with the world itself. When AI operates only at the surface layer, it can become eloquent but hollow. When it is tied to the deeper layers through grounding, executable checks, source review, and human judgment, it becomes part of a disciplined process rather than a simulation of one.

That is why stewardship is not just an ethical slogan. It is an epistemic standard.

In education, this means we should stop asking students to prove they worked alone and start asking them to show how they worked well.

Did they disclose which tools they used?

Did they verify factual claims and citations?

Did they revise weak arguments instead of laundering them through more polished language?

Did they document corrections?

Did they improve the work across iterations?

Can they explain and defend the final result?

These are educationally meaningful questions. They train judgment instead of fear.

They also align with the direction many institutions are already moving. The emerging norm is transparency: disclose the use of AI, specify how it was used, and keep the human agent responsible for the outcome. But transparency by itself is not enough. A vague disclosure paragraph is still too weak. “I used ChatGPT for brainstorming” says very little about whether the resulting work was careful, truthful, or rigorous.

What education needs is verifiable stewardship.

That means durable process artifacts, not just declarations. A serious stewardship framework should make the work legible. It should show what checks were run, what issues were found, what was corrected, and how the final submission differs from the initial AI-assisted draft. It should make room for hybrid work without reducing integrity to a binary between innocence and cheating.
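One way to picture such an artifact is as a small structured record submitted alongside the work. The sketch below is only an illustration, not an established format: the names `Check`, `StewardshipRecord`, and `revision_ratio` are hypothetical, and the character-level comparison from Python's standard `difflib` module is a crude stand-in for a real account of how the final text differs from the first draft.

```python
from dataclasses import dataclass, field
from typing import Dict, List
import difflib


@dataclass
class Check:
    """One verification step and its outcome (hypothetical structure)."""
    description: str       # what was checked
    issue_found: str = ""  # empty string means no issue was found
    correction: str = ""   # what was changed in response, if anything


@dataclass
class StewardshipRecord:
    """A process artifact: tools disclosed, checks run, drafts compared."""
    tools_disclosed: List[str]
    initial_draft: str
    final_text: str
    checks: List[Check] = field(default_factory=list)

    def issues(self) -> List[Check]:
        # Checks that surfaced a problem.
        return [c for c in self.checks if c.issue_found]

    def uncorrected_issues(self) -> List[Check]:
        # Problems that were found but have no recorded correction.
        return [c for c in self.issues() if not c.correction]

    def revision_ratio(self) -> float:
        # Rough share of the text that changed, from 0.0 (identical)
        # to 1.0 (nothing in common). An assumption, not a quality score.
        matcher = difflib.SequenceMatcher(None, self.initial_draft, self.final_text)
        return 1.0 - matcher.ratio()

    def summary(self) -> Dict[str, object]:
        return {
            "tools_disclosed": list(self.tools_disclosed),
            "checks_run": len(self.checks),
            "issues_found": len(self.issues()),
            "issues_uncorrected": len(self.uncorrected_issues()),
            "revision_ratio": round(self.revision_ratio(), 2),
        }


# Illustrative data only; the drafts and checks are invented for the example.
record = StewardshipRecord(
    tools_disclosed=["chat assistant, used for outlining"],
    initial_draft="The treaty was signed in 1815.",
    final_text="The treaty was signed in 1814 and ratified early in 1815.",
    checks=[
        Check(
            description="Verified the treaty date against the cited source",
            issue_found="Draft gave 1815; the source says 1814",
            correction="Changed the signing date to 1814",
        ),
        Check(description="Confirmed each quotation appears in the cited text"),
    ],
)
print(record.summary())
```

A record like this does not prove the work is good. It gives a teacher something concrete to ask about: which checks were run, which issues were left uncorrected, and whether the student can explain each change.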

This is the practical meaning of the shift from policing to stewardship.

Detection says: prove you did not use the machine.

Stewardship says: show how you used it, what you checked, what you changed, and why the final work deserves trust.

That is a far more defensible standard, pedagogically and institutionally. It lowers the temperature of the classroom. It reduces the cops-and-robbers dynamic between students and teachers. It turns AI from an invisible contaminant into an object of reflection, discipline, and responsibility.

Most importantly, it matches the world students are actually entering.

The student of the future will not be the one who avoids AI completely. Nor will it be the one who delegates everything to AI. The student who matters most will be the one who can guide, interrogate, verify, refine, and stand behind an AI-augmented process.

That is what education should be cultivating now.

Not pure authorship as a myth.

Not detection as a ritual.

But stewardship as a practice.

The new standard should be simple: show your process, disclose your tools, verify your claims, document your corrections, and take responsibility for the final work.

That is the shift.

And that is the missing standard in education.

Marcelo Maciel Amaral is a research scientist and founder writing on AI stewardship, verification, and the future of accountable human-AI collaboration.